This article discusses practical approaches to the implementation challenges of the EU AI Act.

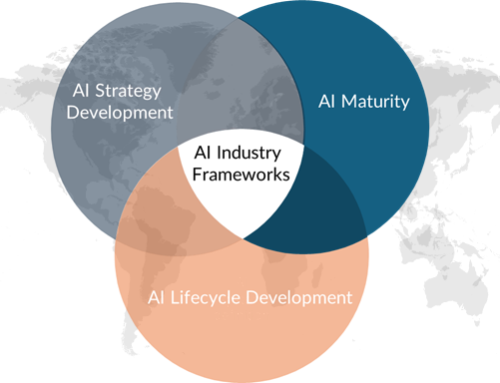

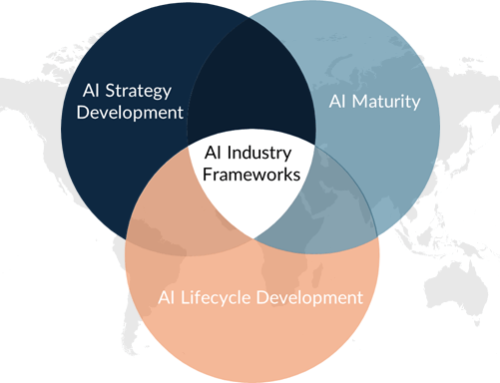

In the previous article, I described how organizations can align their governance practices with several industry frameworks to support compliance with the EU AI Act. The discussion triggered many thoughtful responses from readers. A recurring theme in those conversations was clear: once organizations understand the frameworks, the next question quickly follows — how do they overcome the practical challenges that appear during implementation? This article explores those challenges and possible ways to address them.

When organizations first look at the EU AI Act, the regulation itself may appear relatively clear. The Act introduces a risk-based approach to governing artificial intelligence. Some systems are prohibited. Others are classified as high risk and require strict oversight. Many fall into lower-risk categories: some of these carry transparency obligations, while the rest face few or no specific requirements.

On paper, the logic seems straightforward.

In practice, however, organizations quickly discover that implementation is far more complicated. AI rarely appears as a single, clearly identifiable system. Instead, it tends to be embedded in analytics tools, operational platforms, customer applications, and internal decision-support systems. In many organizations, these capabilities evolved gradually, often without being formally recognized as “AI systems” for governance purposes.

For this reason, compliance with the EU AI Act cannot be achieved through legal interpretation alone. It requires organizations to develop practical governance mechanisms, integrate them with existing processes, and create visibility across their digital landscape.

When organizations begin preparing for the Act, several recurring challenges usually emerge.

Understanding the Implementation Logic Behind AI Governance Challenges

When I began analyzing the implementation challenges of the EU AI Act—drawing on both my own practical experience and publicly available materials—I noticed an interesting pattern. These challenges rarely appear randomly. Instead, they tend to emerge in a fairly consistent order as organizations gradually build their AI governance capabilities.

The process often begins with a simple but critical step: understanding where AI systems exist across the organization. Once these systems are identified, the next challenge involves determining their regulatory risk levels. Only after this visibility is established can organizations integrate governance responsibilities into existing structures, embed risk management into the AI lifecycle, develop documentation and traceability practices, and clarify accountability for AI oversight.

This sequence closely reflects the structure of formal AI governance frameworks such as ISO/IEC 42001, which organize AI governance around system identification, risk management, governance structures, operational controls, documentation, and clearly defined responsibilities. From this perspective, the challenges discussed below can also be understood as the practical steps organizations encounter when establishing an operational AI governance framework.

Challenge 1: Identifying AI Systems Across the Organization

The first challenge organizations encounter is often the most fundamental: understanding where AI actually exists within the organization.

Many companies do not maintain a structured inventory of AI systems. AI capabilities often emerge through analytics initiatives, product development, or operational optimization projects. Over time, predictive models, recommendation engines, and automated decision tools become embedded within broader software environments.

In some cases, teams may not even refer to their solution as artificial intelligence. A predictive model for forecasting customer churn might appear as a feature within a marketing platform. Technically, it is an AI system. Organizationally, however, it may never have been formally documented.

Without this visibility, organizations face an immediate governance gap. It becomes impossible to determine which systems fall under the scope of the EU AI Act.

Addressing this challenge

The starting point is establishing an AI system inventory.

Most organizations do not need to build a completely new mechanism for this. Existing governance tools—such as application registries or data asset catalogs—can usually be extended to capture AI-related information. Teams can begin by documenting systems that use machine learning, automated decision logic, or predictive analytics that influence business processes.

The objective at this stage is not perfect classification but simply gaining visibility.

In one financial services organization, this process began by adding a small set of questions to the existing application registry. System owners were asked whether their application used machine learning, supported automated decisions, or influenced customer or employee outcomes. Within a few weeks, the organization discovered several AI components that had never appeared in governance documentation before.
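The registry extension described above can be sketched in a few lines of Python. This is a minimal illustration, not the organization's actual tooling: the `RegistryEntry` class, its field names, and the sample systems are all hypothetical, and the three boolean fields simply mirror the three screening questions mentioned in the example.

```python
from dataclasses import dataclass


@dataclass
class RegistryEntry:
    """One application registry row, extended with three screening questions.

    All names here are hypothetical and chosen for illustration.
    """
    name: str
    owner: str
    uses_machine_learning: bool = False
    supports_automated_decisions: bool = False
    influences_people_outcomes: bool = False  # customers or employees

    def is_ai_candidate(self) -> bool:
        # Any "yes" flags the system for follow-up.
        # The goal at this stage is visibility, not classification.
        return (
            self.uses_machine_learning
            or self.supports_automated_decisions
            or self.influences_people_outcomes
        )


def ai_inventory(registry: list[RegistryEntry]) -> list[RegistryEntry]:
    """Return the registry entries that belong in the AI system inventory."""
    return [entry for entry in registry if entry.is_ai_candidate()]


# Hypothetical sample data.
registry = [
    RegistryEntry("marketing-platform", "Marketing", uses_machine_learning=True),
    RegistryEntry("payroll-export", "HR"),
    RegistryEntry(
        "loan-prescreen",
        "Lending",
        supports_automated_decisions=True,
        influences_people_outcomes=True,
    ),
]

for entry in ai_inventory(registry):
    print(entry.name, "-", entry.owner)
```

Keeping the questions as plain yes/no fields makes them easy to add to an existing registry form, and deliberately postpones any judgment about risk level to a later step.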

Challenge 2: Determining Whether an AI System Is High-Risk

Once organizations begin identifying their AI systems, the next question quickly follows: which of these systems qualify as high risk under the EU AI Act?

Risk classification depends not only on the technology itself but also on how the system is used. AI supporting recruitment, credit decisions, healthcare processes, or access to essential services may be considered high risk.

In reality, classification is rarely straightforward. Many systems support multiple processes or evolve over time. A model initially developed for internal analytics may eventually influence operational decisions or customer interactions.

Without clear governance guidance, different teams may interpret the criteria differently, leading to inconsistent classification decisions.

Addressing this challenge

Organizations can address this challenge by introducing a structured risk classification process.

Instead of leaving interpretation entirely to project teams, a governance mechanism can guide evaluation using a small set of consistent criteria. These typically include the system’s purpose, the stakeholders affected by its decisions, the degree of automation, and the potential impact on individuals or business operations.

This does not require a complex bureaucratic procedure. Often, a short, structured assessment is sufficient to create consistency across the organization.

For example, a healthcare technology company introduced a brief AI risk questionnaire during project initiation. Product teams answered several questions about the system’s purpose and potential impact, and a small governance group reviewed the responses. This simple step ensured that classification decisions were made consistently across departments.
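A short questionnaire of this kind could be encoded as follows. The sketch assumes four answers that correspond to the criteria listed earlier (purpose, affected stakeholders, degree of automation, potential impact). The class names, the `SENSITIVE_USE_AREAS` set, and the triage rules are hypothetical; they only route a system to the governance group for closer review and do not reproduce the Act's legal classification test.

```python
from dataclasses import dataclass
from enum import Enum


class Triage(Enum):
    POTENTIALLY_HIGH_RISK = "review as potentially high risk"
    STANDARD_REVIEW = "standard review"


# Use areas named in this article; illustrative only, not the Act's legal list.
SENSITIVE_USE_AREAS = {"recruitment", "credit", "healthcare", "essential_services"}


@dataclass
class RiskQuestionnaire:
    system_name: str
    use_area: str              # purpose of the system
    affects_individuals: bool  # stakeholders affected by its decisions
    fully_automated: bool      # degree of automation
    significant_impact: bool   # potential impact on individuals or operations


def triage(answers: RiskQuestionnaire) -> Triage:
    """Apply the same simple rules to every project so results are comparable."""
    if answers.use_area in SENSITIVE_USE_AREAS and answers.affects_individuals:
        return Triage.POTENTIALLY_HIGH_RISK
    if answers.fully_automated and answers.significant_impact:
        return Triage.POTENTIALLY_HIGH_RISK
    return Triage.STANDARD_REVIEW


# Hypothetical example systems.
recruiting = RiskQuestionnaire("cv-ranker", "recruitment", True, False, True)
forecasting = RiskQuestionnaire("stock-forecast", "inventory_planning", False, True, False)

print(recruiting.system_name, "->", triage(recruiting).value)
print(forecasting.system_name, "->", triage(forecasting).value)
```

The value of such a sketch lies less in the rules themselves than in the fact that every team answers the same questions and the governance group sees the answers in the same shape.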

Challenge 3: Integrating AI Governance with Existing Data Governance

As organizations move beyond identification and classification, another issue quickly becomes visible: AI governance cannot operate independently from existing governance structures.

Most organizations already maintain governance frameworks for data management, privacy protection, cybersecurity, and enterprise risk management. AI systems depend heavily on these capabilities. Training datasets require quality oversight. Model development depends on reliable data lineage. Monitoring systems rely on operational metrics.

If AI governance is implemented as a completely separate structure, organizations often experience duplication, overlapping responsibilities, and confusion.

Addressing this challenge

A more effective approach is to extend existing data governance capabilities to include AI governance.

Data governance programs already address many foundational elements required for responsible AI, such as data ownership, metadata management, and data quality monitoring. By building on these structures, organizations can introduce AI-specific controls without creating entirely new governance layers.

In practice, this may involve expanding the responsibilities of data stewards or governance committees to include oversight of datasets used for model training.

A retail organization followed exactly this approach. Rather than creating a separate AI governance team, it integrated AI oversight into its existing data stewardship program. Data stewards responsible for critical datasets were asked to review datasets used in machine learning models, ensuring that training data met quality and governance standards.

Challenge 4: Embedding Risk Management into the AI Lifecycle

The EU AI Act requires organizations to manage the risks of high-risk AI systems throughout their entire lifecycle, from design and development to deployment and ongoing monitoring.

In many organizations, however, governance attention focuses primarily on the development stage. Once a model is deployed, monitoring processes may become informal or inconsistent.

Over time, models may drift, data distributions may change, and system behavior may evolve. Without systematic oversight, organizations may lose visibility into how models perform in production.

Addressing this challenge

To address this challenge, organizations often introduce governance checkpoints across the AI lifecycle.

These checkpoints ensure that risk considerations are reviewed at critical stages, such as during model development, during validation before deployment, and whenever a model is significantly updated or retrained.

The objective is not to slow innovation but to make risk management part of the operational process.

A logistics company implemented a simple rule to achieve this. No AI model could move from testing to production without a short governance review confirming that training data quality, evaluation metrics, and potential risks had been documented. The review typically took less than an hour but ensured that governance considerations remained visible.
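A promotion rule like this one can be expressed as a small gate in a deployment script. The sketch below is an assumption about how such a check might look, not the company's implementation: `GovernanceReview`, `REQUIRED_ITEMS`, and `can_promote` are hypothetical names, and the three required items are the ones named in the example (training data quality, evaluation metrics, potential risks).

```python
from dataclasses import dataclass, field

# The three items the review must confirm as documented (taken from the example).
REQUIRED_ITEMS = ("training_data_quality", "evaluation_metrics", "potential_risks")


@dataclass
class GovernanceReview:
    """Hypothetical record of a pre-production governance review."""
    model_name: str
    documented: dict[str, str] = field(default_factory=dict)

    def missing_items(self) -> list[str]:
        # An item counts as documented only if it has non-blank text.
        return [
            item
            for item in REQUIRED_ITEMS
            if not self.documented.get(item, "").strip()
        ]


def can_promote(review: GovernanceReview) -> bool:
    """A model may move from testing to production only if nothing is missing."""
    return not review.missing_items()


# Hypothetical usage: the review starts incomplete, then is completed.
review = GovernanceReview(
    "route-optimizer",
    {
        "training_data_quality": "Completeness and freshness checks passed.",
        "evaluation_metrics": "Error measured on a held-out month of deliveries.",
    },
)
print(can_promote(review), review.missing_items())

review.documented["potential_risks"] = "Degraded accuracy during seasonal peaks."
print(can_promote(review), review.missing_items())
```

The gate checks only that the items exist, not that they are good. Judging their quality remains the job of the human reviewers.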

Challenge 5: Ensuring Documentation and Traceability

Another major challenge emerges when organizations examine the documentation requirements of the EU AI Act.

High-risk AI systems must be supported by detailed technical documentation. Organizations must be able to demonstrate how a system was designed, which datasets were used, how models were evaluated, and how risks were mitigated.

In many AI development environments, however, documentation practices struggle to keep pace with rapid experimentation and iterative development.

As a result, reconstructing a model’s history or understanding how a particular decision was reached can be difficult.

Addressing this challenge

The most sustainable solution is to integrate documentation into AI development workflows.

Many model development platforms already capture information about datasets, training runs, and evaluation results automatically. By using these tools effectively, organizations can maintain traceability without requiring engineers to produce extensive manual documentation.

One technology company introduced a model management platform that automatically recorded dataset versions, model parameters, and evaluation metrics. Engineers continued their normal development work, but the platform created a clear history of model evolution. When governance teams requested documentation, the required information was already available.
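The kind of record such a platform keeps can be illustrated with the Python standard library alone. This is a simplified sketch rather than the API of any real model management product: `record_training_run`, `history`, and the field names are hypothetical, and an in-memory list stands in for the platform's storage.

```python
import hashlib
import json
from datetime import datetime, timezone

# Fields that describe what was trained; the timestamp is excluded on purpose.
TRACKED_FIELDS = ("model_name", "dataset_version", "parameters", "metrics")


def record_training_run(log, model_name, dataset_version, parameters, metrics):
    """Append one training run to the log and return the stored entry."""
    entry = {
        "model_name": model_name,
        "dataset_version": dataset_version,
        "parameters": parameters,
        "metrics": metrics,
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }
    # A fingerprint over the tracked fields makes identical runs easy to spot
    # and later edits to an entry easy to detect.
    payload = json.dumps({k: entry[k] for k in TRACKED_FIELDS}, sort_keys=True)
    entry["fingerprint"] = hashlib.sha256(payload.encode("utf-8")).hexdigest()
    log.append(entry)
    return entry


def history(log, model_name):
    """Return all recorded runs for one model, in the order they were logged."""
    return [entry for entry in log if entry["model_name"] == model_name]


# Hypothetical usage with made-up values.
run_log = []
record_training_run(run_log, "churn-model", "customers-v3",
                    {"max_depth": 4}, {"auc": 0.81})
record_training_run(run_log, "churn-model", "customers-v4",
                    {"max_depth": 6}, {"auc": 0.84})

for run in history(run_log, "churn-model"):
    print(run["dataset_version"], run["parameters"], run["metrics"])
```

Because the record is written as a side effect of training, engineers do not have to produce it by hand, which is the point of the approach described above.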

Challenge 6: Establishing Clear Accountability

Finally, organizations must address a governance question that often proves difficult in practice: who is responsible for AI systems?

AI initiatives usually involve multiple teams. Data scientists develop models. IT teams manage infrastructure. Business units deploy the solutions. Compliance teams interpret regulatory requirements.

Without clear accountability, governance tasks can easily fall between organizational boundaries.

Addressing this challenge

Organizations typically address this challenge by defining clear ownership roles for AI systems.

This often mirrors traditional IT governance. Each system has a business owner responsible for outcomes, a technical owner responsible for model performance, and a governance function responsible for oversight.

One insurance company formalized this structure by introducing the role of AI system owner, similar to application ownership in IT governance. This individual was responsible for ensuring that documentation, monitoring, and compliance activities were maintained throughout the system’s lifecycle.
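The three-role structure can also be checked mechanically once an inventory exists. The following sketch assumes each AI system record carries a business owner, a technical owner, and a governance contact; the `AISystemOwnership` class and the sample names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AISystemOwnership:
    """Hypothetical ownership record for one AI system."""
    system_name: str
    business_owner: Optional[str] = None      # responsible for outcomes
    technical_owner: Optional[str] = None     # responsible for model performance
    governance_contact: Optional[str] = None  # responsible for oversight

    def unassigned_roles(self) -> list[str]:
        roles = {
            "business_owner": self.business_owner,
            "technical_owner": self.technical_owner,
            "governance_contact": self.governance_contact,
        }
        return [role for role, person in roles.items() if not person]


def accountability_gaps(systems: list[AISystemOwnership]) -> dict[str, list[str]]:
    """Map each system with at least one unassigned role to the roles it lacks."""
    return {
        s.system_name: s.unassigned_roles()
        for s in systems
        if s.unassigned_roles()
    }


# Hypothetical sample data.
systems = [
    AISystemOwnership("claims-triage", "Head of Claims", "ML Platform Lead", "Risk Office"),
    AISystemOwnership("fraud-scoring", business_owner="Head of Fraud"),
]

print(accountability_gaps(systems))
```

A report like this shows where governance tasks are at risk of falling between organizational boundaries before an audit or incident reveals the gap.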

Turning Regulation into Operational Governance

The EU AI Act introduces one of the most comprehensive regulatory frameworks for artificial intelligence. For organizations, however, compliance ultimately becomes an operational challenge rather than a purely legal one.

Organizations must first understand where AI systems exist, determine their risk levels, integrate governance with existing data management capabilities, embed risk management into development processes, maintain documentation and traceability, and establish clear accountability.

In this sense, implementing the EU AI Act is less about interpreting regulatory language and more about building governance structures that enable responsible AI deployment in everyday operations.

At the same time, organizations rarely operate within a single regulatory environment. Companies developing or deploying AI systems often face multiple regulatory expectations across different regions.

The next article in this series, therefore, explores how organizations navigate AI governance within another important regulatory landscape: the United Kingdom’s principles-based approach to AI oversight.